ABSTRACT:

Synthetic data is generated from computing models and simulations of real-world situations. The support and interest for synthetic data sets have been growing with the demand for large data sets to train AI modules and recent developments in data synthesis technology. From improving data access for AI and ML applications to eliminating research roadblocks posed due to data restrictions imposed by GDPR, HIPAA, and other contemporary regulations, synthetic data solves multiple problems. It also improves the quality of existing databases by providing diversity and filling data gaps. Data scientists have started using synthetic data for exploratory analysis, validation, and data substitution in all types of research projects. Synthetic data generation can also bring theatrical economic transformations and create startling opportunities with its immense business applications. Businesses and big IT giants now trust synthetic data and are creating utility frameworks that support synthetic data applications.

Goodfirms’ research titled ‘Synthetic Data - Use, Purpose, Challenges, and its Future Applications’ forays into the utility of disruptive technologies such as AI-generated synthetic data to counter and address the concerns surrounding data privacy, scalability, and preservation. The research will identify and analyze potential use cases, challenges, and areas of future applications with synthetic data.

Table of Contents:

Introduction

An Overview of Synthetic Data

- What is Synthetic Data?

- Synthetic Data Generation Models:

- Types of Synthetic Data

- Synthetic Data Overcomes the Limitations of Real-World Data

- How Secure is Synthetic Data?

- Advancing Facial Recognition Technology (Deep Fakes)

- Clinical Trials

- Training AI for Detecting Fraudulent Transactions

- Autonomous Vehicles

- Synthetic Data in Financial Markets for Trading, Portfolios, and Risk Management

- Synthetic Data Usage in Ecommerce

- Synthetic Data for Customer Data Analytics

- Software Testing

- Eliminating Bias Associated With Real-World Data

- Quality-Control: Advanced Testing for Zero-Defect Manufacturing

- Human Resources

- Cross-Border Data Sharing

Challenges With Synthetic Data

- Synthetic Data: A promising technology with inherent risks

- Costly Investments Make Synthetic Data Out of Reach for Many Businesses

- Accuracy is Hard to Determine

- Inference Challenges if Critical Personal Identifiable Information is Removed

- Acceptance Challenges

- Misuse

- Lack of Skilled People to Handle the Synthetic Data Generation Process

Future Applications of Synthetic Data

- Synthetic Data Will Emerge as a Key Resource for AI Developments

- Product Developments Using Synthetic Data

- Synthetic Data Set to Change the Realms in Robotics

- Testing Critical Cloud Migration and Update Environments

- Immense Future Applications in Agricultural Innovations and Practices

- Addressing Data Intensive Metaverse Deployments

Introduction

The unprecedented availability of consumer data that contains information about their preferences, behaviors, and characteristics drives business research and marketing innovations. However, companies that gather such volumes of consumer data can find themselves in legal, ethical, and financial trouble without assiduous data governance. In a consumer world marked by extreme concerns regarding data privacy and confidentiality, synthetic data emerges as a solution to ‘emulate data and draw valid inferences’ without risking user privacy. Also, market researchers often need humongous data to validate hypotheses and unearth patterns and trends. Small datasets are not compatible with research analysis. Synthetic data that can be scaled to any extent solves this issue.

The study ‘Synthetic Data - Use, Purpose, Challenges, and its Future Applications’ attempts broad research into the realms of the synthetic data world and seeks to identify the current usages, objectives, challenges, and future directions in which synthetic data can evolve. This report also provides a detailed overview of synthetic data, its current business value, and how it can solve AI scalability issues.

An Overview of Synthetic Data

What is Synthetic Data?

Synthetic data is generated by AI-based algorithms trained on high-quality, real-world data. It retains the predictive capacities, statistical properties, and analytical patterns of the original datasets. Artificial intelligence can generate all types of data forms, such as binary, categorical, numeric, continuous, discrete, qualitative, quantitative, ordinal, etc. AI-powered tools are capable of generating more complicated and sophisticated data forms such as big data, time-stamped data, unstructured/structured data, machine data, spatiotemporal data, and many more.

The ‘Lorem Ipsum’ placeholder text is one of the most common examples of synthetic data. It allows designers to place text on visual graphics. There are multiple other reasons that necessitate data researchers to create and use data that is open, doesn’t contain sensitive values, and still represents real-world observations.

Synthetic data has evolved from dummy data sets used in statistical projects to real-time data sets that are used in privacy-compliant business environments. The AI-based algorithms that generate synthetic datasets have become highly sophisticated with advancements in technology and can create voluminous datasets that match the attributes of real data. Synthetic data is a potential solution for pharmaceuticals, finance, banking, eCommerce, and IT sectors that struggle to scale their AI capabilities.(1)

Synthetic Data Generation Models:

Synthetic data creation is a complicated process, and the quality of synthetic data is also related to the synthesis model used.

Synthetic data is artificially modeled from original data and retains statistical properties, structure characteristics, and other attributes embedded in the original data. Businesses and data researchers can use processes such as probability distribution, multi-agent simulations, or GAN (Generative Adversarial Networks) to create synthetic data based on the requirements.(2)

The European Data Protection Supervisor, an official website of the European Union, asserts that the utility of any method/model depends on the degree to which it can create an accurate proxy for the real data.(3)

For instance, the probability distribution method is appropriate for stock portfolios and future price predictions, whereas robots, drones, self-driving cars, and their simulations will require multi-agent simulation techniques. The GAN model of synthetic data generation is mostly used for more advanced creations such as AI-generated images or deep fakes.(4)

Types of Synthetic Data:

Full Synthetic

Fully synthetic data sets use multiple imputation frameworks and fill in missing datasets with artificially created substitute values. Fully synthetic data is created from random inputs and doesn’t retain any attribute of the real data.

Partial

The partially synthetic data is created by imputation frameworks that replace some values in a dataset with artificially generated data. Only certain sensitive or targeted values are altered to prevent disclosure or address privacy challenges.

Hybrid

Hybrid synthetic data combines the values from both real and synthetic data. It retains the qualities of both types of data and offers better data insights.

Synthetic Data Overcomes the Limitations of Real-World Data

Improving machine learning models, creating data for third-party analysis, or testing an existing system are some of the reasons we need synthetic data.

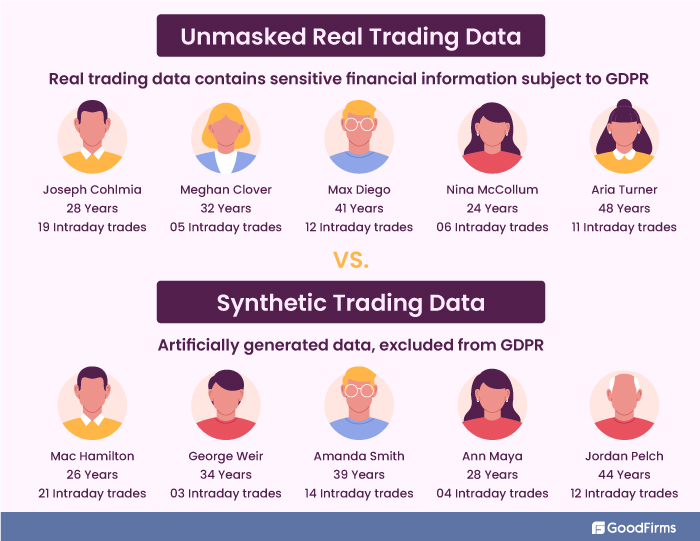

AI/ML models have the potential to solve critical business problems. However, the solutions may need insights achieved from analyzing personally identifiable data. Regulations such as GDPR prohibit researchers from accessing or using personally identifiable data. GDPR protects some fields and online identifiers such as names, race, ethnic origin, political inclination, religious or philosophical belief, genetics, bank details, biometrics, sexual orientation, postcode, age, gender, etc.(5) Now, as privacy laws and regulations such as GDPR prohibit companies from collecting personally identifiable data, the role of synthetic data becomes prominent.

The growing demand for privacy and optimization of data utility drives the synthetic data revolution.

Also, we need synthetic data to solve the limitation issues associated with real data. The developments in machine learning and deep learning are leading the exponential growth in data extraction and analysis technologies. Businesses are able to collect vast amounts of data in no time with computational technologies and data extraction tools. However, data creation in the real world has limitations. Data in the real world is not infinite, and the current exponential rise charts will soon turn into sigmoid ones. However, synthetic data is not bound by any such limitations.

How Secure is Synthetic Data?

Synthetic data enables fair, responsible, and safe usage of sensitive information.

The algorithms that use generative processes to create synthetic data deploy data obfuscation techniques to eliminate security concerns. For instance, any sensitive data will be deprived of certain sensitive attributes that may lead back to the real data. Anonymization of content is a popular technique that prevents any mapping back to real data.

Synthetic Data Use Cases

Advancing Facial Recognition Technology (Deep Fakes)

Synthetic data can generate non-identifiable datasets that can be employed in a privacy-safe environment for sensitive fields such as facial recognition technology. The AI-based deep learning algorithms involving generative neural network architectures can be fed with synthetic data for training purposes to address privacy concerns in facial recognition technology.

Deepfake technology synthesizes human faces to create replicas in image/video forms. Using synthetic data for AI training purposes can expedite the deep fake creation process. In February 2019, Philip Wang, a data engineer, created a GAN (generative adversarial network)(6) that displays a new artificially generated face every time a user refreshes the page. The GAN is based on software that was developed in 2018 by Nvidia researchers.

Clinical Trials

A recent research study(7) in April 2021 compared the clinical trial analysis results obtained from real-world data and synthetic data. Synthetic data usage is valid in clinical trials and can expedite trials as the data doesn’t require ethics reviews and is devoid of sensitive patient information.

The National Institutes of Health in the U.S. collaborated with Syntegra, an IT startup, to synthetically generate a nonidentifiable replica of the NIH’s database of COVID-19 patient records. The synthetic data set comprises more than 2.7 million screened patients and 413K COVID-positive patients. The synthetic data will be shared with researchers across the globe for a better understanding of the disease.(8)

Training AI for Detecting Fraudulent Transactions

Government agencies and enforcement departments track global online transactions, conversations, etc., to find red flags related to illegal activities. Government intelligence systems need to be trained to find anomalies and suspicious transactions/conversations. Synthetic data helps federal agencies train their AI software to detect fraudulent transactions, suspicious transactions, terror-funding cases, etc.

Autonomous Vehicles

When it comes to self-driving cars, there's a growing concern over whether they can understand the world around them and make accurate judgments. For example, even sophisticated algorithms are poor at recognizing objects when they are blurred or moving fast. Currently, there are no standards for the technology used in self-driving cars; however, there are tremendous efforts underway to create guidelines for manufacturers and train cars with extreme datasets. One potential solution is to use synthetic data that allows computers embedded in self-driving cars to learn from synthetic datasets and build models that can be deployed in real-world traffic situations.

Synthetic Data in Financial Markets for Trading, Portfolios, and Risk Management

Financial giants such as JP Morgan have already devised systems that compare metrics of real-world data with synthetic data sets.(9) The synthetic data is used for multiple purposes in the financial world, including creating datasets that can detect money laundering, data for equity market analysis, simulating customer interactions, and overcoming privacy issues related to personally identifiable financial data. Synthetic data can generate future patterns by analyzing historical data and evaluating past patterns to predict the future movements of stocks. It is used to devise AI-based trading strategies confirmed after testing synthetic data.

Synthetic data use cases in banking include advanced analytics, the credit assessment, fraud detection, cyber security monitoring, etc. While synthetic data retains granular insights of original data, it eliminates privacy issues related to real-world banking data.

Synthetic Data Usage in Ecommerce

E-commerce companies are increasingly using AI-based recommendation modules to provide personalized buying experiences, improve pricing decisions, forecast demand, etc. The algorithms run and train themselves on large data sets generated from previous customer interactions. E-commerce businesses can augment their data sets and scale them further for better insights by using synthetic data generation techniques. Ecommerce also uses images to display products. Synthetic data can be used to create human-like images, particularly for the clothing segment. In cooperative promotions between brands, synthetic data enables the exchange of critical information without risking the privacy of users.

Synthetic Data for Customer Data Analytics

Modern businesses strive to understand consumer patterns, customer behavioral trends, and buying habits to influence consumer decisions and provide customized services. Personalized services and hyper-targeted promotional efforts require data analytics based on real-time and high-quality datasets. Synthetic data can help businesses uncover new trends and accurately segment customer campaigns by providing deeper insights into consumer behavior. Synthetic data is also immune to legacy issues in statistical analysis, such as 'skip patterns' or 'non-response.'(10)

Software Testing

Every application has bugs, and bugs cost money and application stability issues. It is critical to test applications before releasing them. Testing each software component and the product using real-life data is often difficult, as real-life data usually contain personal information and are thus subject to regulations such as GDPR.

A recent instance of GDPR fines for using personal data for testing:

In 2021, the Norwegian data protection authority fined the Norwegian Olympic and Paralympic Committee and Confederation of Sports ('NIF') approx €124,430) for disclosing personally identifiable information of 3.2 million individuals online following an error that occurred when testing a cloud solution.(11)

Synthetic data can be used for testing and maintaining consistency across different applications. Synthetic data includes test data and metadata that help to track changes and maintain consistency across different applications. Synthetic data can be used to populate databases with test data.

It has become critical for both big and small companies to increase deployment frequency while reducing production issues by adopting DevOps best practices. Continuous testing is one of the core components of achieving this outcome. Software developers can create a testbed for more granular experiments with a limitless flow of synthetic data and thus eliminate problems associated with updates and new releases of their software solution.

Eliminating Bias Associated With Real-World Data

There are multiple real-world scenarios where data generated is mired in bias. For instance, if AI is trained on data-related to hiring qualified candidates for the post of CFO and it is fed with data that reveals only 15% of CFOs as women and the rest as males; the AI will imbibe the intrinsic bias that men are more qualified to become CFOs and will prioritize the CVs of males over females. This erroneous fallacy can lead to discriminatory hiring decisions.

Companies use artificial intelligence to get rid of the subjective errors made by humans, and with biased data, AI is bound to repeat the same mistakes. This is where synthetic data feeds can work to eliminate the bias. Synthetic data can balance the underrepresented entities in a given dataset and fix the imbalance and biases.

Quality-Control: Advanced Testing for Zero-Defect Manufacturing

The manufacturing industry strives to reduce faulty production and eliminate defective manufacturing to achieve financial and sustainability goals. Synthetic data can be used for production model developments to achieve a Zero-defect manufacturing environment. Synthetic data enables advanced quality control and testing by assisting in identifying any anomalies that may lead to defects.(12)

Human Resources

Recruitment software solutions trained on synthetic data can make talent sourcing more diverse, equitable, and quality-oriented. Synthetic data can be created to train algorithms that can better identify roles, skills, functions, experience, personal attributes, job profiles, etc., from candidate databases and online resources.

Cross-Border Data Sharing

Today’s businesses are connected globally. However, with stringent data regulations and different privacy standards in different countries, it becomes difficult for organizations to share real-time data with subsidiaries, technology partners, clients, and peers. Also, when data is sent outside, it is susceptible to cyber-attacks. Devoid of personal attributes, the anonymity of synthetic data makes it a perfect solution to cross-border data sharing problems.

Challenges With Synthetic Data

Synthetic Data: A promising technology with inherent risks

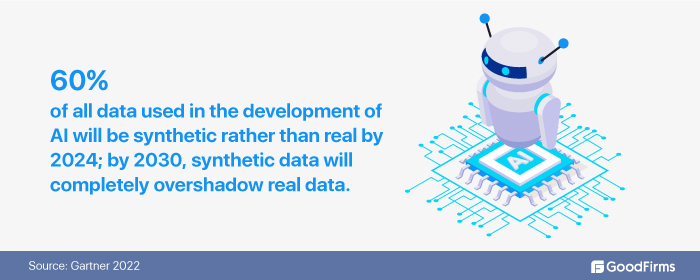

Real data is the most preferred and trusted source of insights. However, due to its sensitive nature, it is expensive, inaccessible, or unusable due to privacy issues. Synthetic data has promising potential to mitigate the issues related to real data. It can also be used as a supplement to existing datasets. With data augmentation and anonymization techniques, businesses can inject synthetic data into their AI models for training purposes. Synthetic data is a promising solution to enhance machine learning models' stability, speed, and performance. The future of artificial intelligence is synthetic data, says Gartner.(13)

However, synthetic data risks are real too. There are inherent risks related to the quality and functionality of synthetic data results. Additional models for the verification of synthetic data (comparing synthetic data with human-annotated data) will be required in the future. Also, the current perception of artificial data as fake or inferior to real data will have to be mitigated for synthetic data to gain broader adoption.

The key challenges associated with synthetic data are:

Costly Investments Make Synthetic Data Out of Reach for Many Businesses, Especially the Startups

Synthetic data runs on high-end generative models that are generally very costly. It also requires expensive hardware and the supervision of data scientists. The costs may be prohibitive for budget-constraint organizations. Also, it may require many trials to generate the final and accurate synthetic data sets. Expensive investments in computing power, highly skilled data engineers, and costly AI-based synthetic data generator tech such as GAN make synthetic data out of reach for many businesses.

Accuracy is Hard to Determine

The quality of synthetic data largely depends on the reliability of the synthetic data generator along with the quality of AI, input datasets, and algorithms used in the process. The accuracy of synthetic data is hard to determine unless businesses have real-data samples to compare with it. The overall quality largely depends on the data used to train the algorithms.

Inference Challenges if Critical Personal Identifiable Information is Removed

In privacy-constrained business environments, Synthetic data is created by stripping any personally identifiable information (names, addresses, license numbers, cell phone number numbers, biometrics data, etc.) from a real dataset. Now, if personal data is critical for better data analysis and drawing inferences, then eliminating those parameters can cause analysis issues. Replacement of such essential data sets with synthetic data may not bring accurate results.

Acceptance Challenges

Synthetic data is a relatively new concept. It is yet to test the acceptance from all quarters of businesses and varied sectors. Due to accuracy issues and credibility factors, sectors like defense, pharma, and stock markets may not easily accept synthetic data.

Misuse

The extreme flexibility associated with synthetic data makes it a potential candidate for misuse too. Without accurate validation, there is a high chance that manipulation of synthetic data for illegal usage can occur. Smart data engineers can deliberately feed data to create synthetic data that fits their mercenary or malicious goals.

Lack of Skilled People to Handle the Synthetic Data Generation Process

There is a dearth of data engineers and software professionals who are well-versed in all synthetic data processes such as embedding, sequential synthesis, anonymization, data distribution, Hellinger distance, bivariate correlations, etc.

Future Applications of Synthetic Data

Synthetic Data Will Emerge as a Key Resource for AI Developments

Training AI systems requires a voluminous amount of data. It takes a lot of time to collect, sort, clean, and annotate data for potential AI usage. Moreover, it is difficult to train AI in cases of data shortages. Future data scientists will exponentially harness synthetic data's power to train their AI. Synthetic data is bound to substitute its real-life counterpart and in a much-upgraded version.

Data is the fuel on which AI algorithms run. AI performance improves automatically if the training data is ample, high-quality, and relevant. As synthetic data models are witnessing high growth, their data output quality will increase further, and better results will follow.

Product Developments Using Synthetic Data

Companies are increasingly relying on research data to bring innovative products to market. Businesses that rely on other vendors for collaborative product development have to share sensitive research data with the vendors. Sharing actual market research data that contains customer information can be risky as vendors might misuse it or sell it to competitors. Synthetic data can solve this problem. In the future, businesses will populate databases with synthetic data for riskless data sharing.

Synthetic Data Set to Change the Realms in Robotics

In robotics, the training of robots for manufacturing, warehousing, or logistics utilization requires simulations using a variety of environments and several thousand training datasets. Synthetic data has capabilities to 1000x the current data and ensure that robots adapt to simulations.(14) Synthetic data has just begun its journey in the robotic vision field, and the future applications are endless.

The advancements in the massive amount of computing power and storage capabilities have powered innovations in robotics. The solutions offered by deep learning and neural networks, along with computing power and data density, enable products like Alexa, Siri, or autonomous vehicles like Tesla cars. The amount of computer processing power that can be applied to training modules has increased exponentially in the past few years. The challenge, however, lies in getting high-quality data to train the modules.

“In our fulfillment centers, every time a robot initiates a grasp of a package, it needs to identify and plan how it is going to use its actuators or move around the room. The more data you provide to the training module, the more accurate it becomes. Even with the billions of packages we ship, we still don’t have enough pictures of the packages in enough random positions. So, for amazon robotics, we ended up with this idea of synthetic data generation,” says Bill Vass, The VP of Engineering at AWS.(15)

Testing Critical Cloud Migration and Update Environments

When organizations move from legacy systems to the cloud or change their current cloud environments, data-related risks are involved. Currently, all migrations are carried with actual data (backup is taken before migration). Organizations have to share their real data with cloud service providers before they can utilize the services.

Now, even if the migration fails, the cloud service provider has access to the real data of the organization. Synthetic data provides an alternative to sharing real data for testing cloud migration. In the future, businesses will test such migrations with synthetic data, and their real data will not be shared initially with third-party cloud providers.

Immense Future Applications in Agricultural Innovations and Practices

The state-of-art computer vision technology is already applied for yield prediction, phenotyping, crop disease detection, weed identification, growth monitoring, etc. Complex datasets in various formats are required for accurately training the AI used in the agricultural domain. Synthetic data is a potential resource for feeding AI that increases agricultural productivity and output.

Synthetic data will support yield performance, production optimization, farm-specific decision-making, and nutrient concentration parameters.(16) Synthetic data models will also address data efficiency challenges in multi-modal sensor machines.(17)

Addressing Data Intensive Metaverse Deployments

Metaverse deployments will be data intensive. To recreate virtual versions of real-life environments, AI will require training with high-quality data in 3D environments. Synthetic data will be a key enabler for such recreations. Major advancements in the AI landscape and the synthetic data revolution will open the floodgates for metaverse-based applications across an array of industries.

Key Findings

- Synthetic data overcomes the limitations of real-world data.

- Synthetic data enables fair, responsible, and safe usage of sensitive information.

- Synthetic data can be used for production model developments to achieve a Zero-defect manufacturing environment.

- Synthetic data can generate non-identifiable datasets that can be employed in a privacy-safe environment for sensitive fields such as facial recognition technology.

- Synthetic data usage is valid in clinical trials and can expedite trials

- Synthetic data can be used in financial markets for trading, portfolios, and risk management.

- Synthetic data can be used in eCommerce to develop more personalized customer experiences.

- Recruitment software solutions trained on synthetic data can make talent sourcing more diverse, equitable, and quality-oriented.

- Software developers can eliminate problems associated with updates and new releases of their software solutions with continuous testing with synthetic data.

- Synthetic data is a potential solution to mitigate privacy and security issues associated with cross-border data sharing.

- Synthetic data, if used efficiently, can eliminate multiple biases associated with real-world data.

- The top challenges associated with synthetic data are its potential misuse, costly investments, accuracy, inference, and acceptance challenges.

- Marketers will use synthetic data more for product developments.

- Synthetic data usage for testing critical cloud migration and updating environments will increase in the future.

- Synthetic data will emerge as a key resource for artificial intelligence developments.

- There are immense applications of synthetic data in agriculture to increase production, detect diseases, and prevent crop failures.

- Synthetic data in robotics can enhance work productivity in warehouses, improve logistics, and increase manufacturing output.

Conclusion

The world is heading towards a synthetic data economy where intelligent systems are trained on datasets acquired from AI-based data synthesis. From generating marketing imagery to simulating metaverse, synthetic data provides the resources that real data lacks. The synthetic data ecosystem has evolved over the years. Today, it is a sophisticated entity that can solve multiple issues in data-intensive business environments.

Our research concludes that synthetic data will be used everywhere in the future, from creating software solutions and super apps with high-dimensional data inputs to developing algorithms for automated sales and marketing. Organizations that explore and adopt the synthetic data premise and real data can achieve more from their overall data strategy.

References:

- https://www.forbes.com/sites/robtoews/2022/06/12/synthetic-data-is-about-to-transform-artificial-intelligence/

- https://www.oreilly.com/library/view/accelerating-ai-with/9781492045991/

- https://edps.europa.eu/press-publications/publications/techsonar/synthetic-data_en

- https://www.reworked.co/information-management/the-role-of-synthetic-data-in-emerging-digital-workplace-technologies/

- https://gdpr-info.eu/

- https://www.mdpi.com/1424-8220/20/19/5481/htm

- https://thispersondoesnotexist.com/

- https://pubmed.ncbi.nlm.nih.gov/33863713/

- https://www.syntegra.io/news/syntegra-partnering-with-national-institutes-of-health-nih-and-the-bill-and-melinda-gates-foundation-to-democratize-access-to-the-largest-set-of-covid-19-patient-records

- https://www.jpmorgan.com/synthetic-data

- https://www.synthesized.io/reports-and-whitepapers/top-10-synthetic-data-use-cases-and-applications-for-2022

- https://www.dataguidance.com/news/norway-datatilsynet-fines-nif-nok-12m-disclosing

- https://www.gartner.com/en/newsroom/press-releases/2022-06-22-is-synthetic-data-the-future-of-ai

- https://www.mdpi.com/1424-8220/20/19/5481/htm

- https://twimlai.com/podcast/twimlai/synthetic-data-generation-for-robotics-with-bill-vass/

- https://onlinelibrary.wiley.com/doi/full/10.1111/1477-9552.12505

- https://food-manufacturing.berkeley.edu/yield-sensing-and-forecasting/